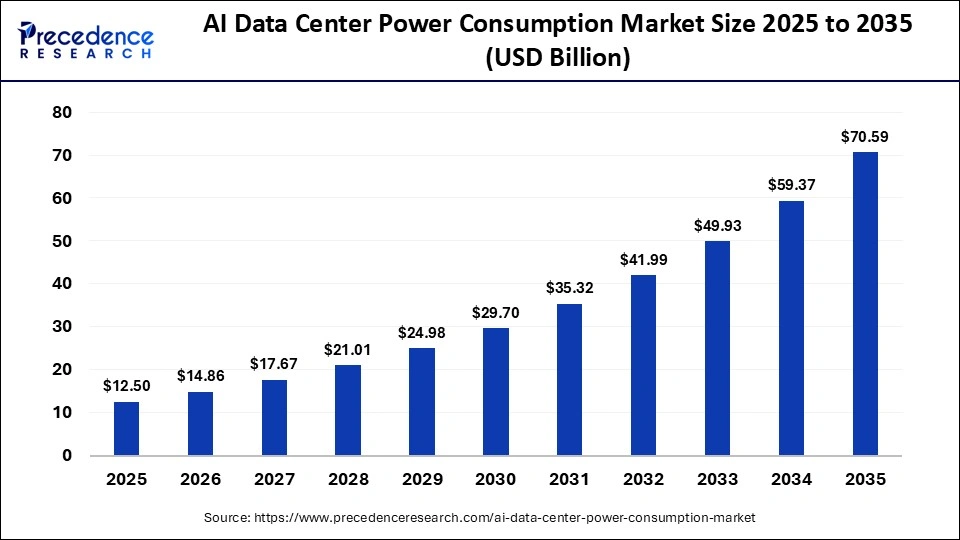

The global AI data center power consumption market is set to expand rapidly as artificial intelligence workloads reshape digital infrastructure. According to Precedence Research, the market is projected to grow from USD 14.86 billion in 2026 to approximately USD 70.59 billion by 2035, a CAGR of 18.90% over that period.
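
As a quick sanity check on the headline figures, the sketch below recomputes the compound annual growth rate from the two endpoint values. It assumes nine compounding periods (2026 to 2035) and the standard CAGR formula; the function names `cagr` and `project` are illustrative and do not come from the report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value reached after compounding `start_value` at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Endpoint figures quoted from the Precedence Research projection (USD billion).
start_usd_bn = 14.86   # 2026
end_usd_bn = 70.59     # 2035
periods = 2035 - 2026  # assumption: nine annual compounding periods

implied = cagr(start_usd_bn, end_usd_bn, periods)
projected = project(start_usd_bn, 0.1890, periods)

print(f"Implied CAGR 2026-2035: {implied:.2%}")
print(f"2035 value at 18.90% CAGR: USD {projected:.2f} billion")
```

Under these assumptions the implied rate and the stated 18.90% agree to within rounding, so the two endpoint values and the growth rate are mutually consistent.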

This growth is fueled by the rapid rise of generative AI models, large language models (LLMs), GPU-intensive training workloads, and hyperscale cloud deployments, all of which consume far more energy than traditional data center operations. As AI adoption accelerates across industries, power optimization, cooling efficiency, and energy infrastructure upgrades are becoming critical priorities for enterprises worldwide.

Quick Insights – AI Data Center Power Consumption Market

- Market expected to reach USD 70.59 billion by 2035

- Growing at a strong CAGR of 18.90% (2026–2035)

- North America dominates with ~42% market share (2025)

- Asia Pacific projected to grow fastest (~21.5% CAGR)

- Hyperscale data centers account for ~45% of total demand

- Cooling systems represent the largest component segment (~30% share)

- Liquid cooling systems expected to grow at ~24.5% CAGR

- Generative AI workloads are the fastest-growing application segment

- IT & telecommunications remains the leading end-use industry

Why Is AI Driving Massive Data Center Power Consumption?

The AI data center power consumption market is fundamentally driven by the computational intensity of modern AI systems, especially deep learning and generative AI models. Training large models requires thousands of high-performance GPUs operating continuously, significantly increasing electricity demand across compute, storage, and networking layers.

Additionally, AI workloads generate extreme heat densities, requiring advanced cooling systems that further increase overall energy consumption. This has positioned power efficiency as one of the most critical challenges in modern data center design.

How Is AI Transforming Data Center Energy Infrastructure?

Artificial intelligence is not only a driver of power demand but also a solution for optimizing it. AI-based energy management systems are increasingly being deployed to monitor and regulate power usage in real time, enabling predictive load balancing and dynamic cooling adjustments.

In addition, AI-driven analytics help operators forecast workload spikes and optimize GPU utilization, reducing energy waste while improving operational efficiency. This dual role of AI, as both a consumer and an optimizer of energy, makes it central to the future of data center infrastructure.

What Are the Key Growth Drivers of the Market?

The AI data center power consumption market is expanding due to several structural shifts in global computing demand:

- Rapid adoption of generative AI and LLMs across industries

- Increasing deployment of hyperscale cloud infrastructure

- Rising demand for real-time AI inference services

- Growing dependence on GPU-accelerated computing clusters

- Expansion of 5G, IoT, and edge AI applications

- Rising need for continuous, high-density compute workloads

These factors collectively intensify electricity consumption across global data center ecosystems.

What Opportunities Are Emerging in the Market?

Why Is Liquid Cooling Becoming a Major Opportunity?

Liquid cooling systems are rapidly replacing traditional air cooling because they handle high-density GPU clusters more effectively. As AI workloads generate significantly higher heat loads, liquid cooling is becoming essential for sustaining performance.

How Is Edge AI Creating New Power Demand Patterns?

Edge AI deployments are decentralizing computing infrastructure, increasing the need for smaller, distributed data centers with localized power optimization systems. This shift is opening new opportunities in modular power management solutions.

Why Are Hyperscale Data Centers Expanding So Rapidly?

Hyperscale operators are scaling aggressively to support AI model training and deployment, leading to massive investments in power infrastructure, energy storage, and grid integration technologies.

Regional Analysis – Where Is Growth Accelerating?

Why Does North America Lead the Market?

North America dominates due to early adoption of AI technologies, strong hyperscale cloud providers, and large-scale investments in AI infrastructure by major technology companies. The region continues to serve as the global hub for AI innovation and deployment.

Why Is Asia Pacific the Fastest-Growing Region?

Asia Pacific is expected to grow at the fastest rate due to rapid digital transformation, expanding AI adoption in China and India, and increasing investments in data center infrastructure and semiconductor ecosystems.

What Is Europe’s Role in the Market?

Europe is witnessing steady growth, driven by regulatory focus on energy efficiency, sustainable computing initiatives, and increasing AI integration across industrial sectors.

Who Are the Key Players in the Market?

The competitive landscape includes global technology leaders and infrastructure providers focused on energy optimization and AI-ready data centers:

- Microsoft

- Amazon Web Services

- Meta Platforms

- NVIDIA

- IBM

These companies are investing heavily in scaling AI infrastructure, liquid cooling technologies, energy-efficient GPUs, and advanced data center architectures.

Request a sample of the report: https://www.precedenceresearch.com/sample/8318