Artificial intelligence is often framed as a race for more powerful models. In reality, the defining constraint of the AI era is not intelligence but infrastructure.

As AI systems move from experimentation to production, the question is no longer how large models can become, but how efficiently they can be deployed, scaled, and sustained. The future of AI will not be determined by raw compute alone, but by how intelligently that compute is designed, distributed, and optimized.

From the perspective of building AI-native platforms, the bottleneck is increasingly clear: performance without efficiency is no longer viable.

At the semiconductor level, this shift is already underway. General-purpose computing architectures are giving way to highly specialized systems designed for parallel workloads. GPUs, TPUs, and custom accelerators are not just incremental improvements; they represent a structural shift toward domain-specific computing.

However, the next phase of innovation will not come from simply adding more accelerators. It will come from how these systems are orchestrated. Heterogeneous computing, where CPUs, GPUs, NPUs, and specialized chips operate cohesively, is emerging as the dominant paradigm. This allows workloads to be dynamically allocated based on their characteristics, significantly improving both throughput and energy efficiency.
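To make "allocating workloads based on their characteristics" concrete, here is a minimal sketch in Python. It assumes a simple Amdahl-style cost model: each workload is split into a parallel and a serial fraction, and each device has separate throughputs for each kind of work plus a power draw. The device names, numbers, and the `assign` helper are hypothetical illustrations, not measurements of real hardware or the API of any production scheduler, and the model ignores queuing, contention, and data-transfer costs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    parallel_gflops: float  # assumed throughput on parallel work (GFLOP/s)
    serial_gflops: float    # assumed throughput on serial, branchy work (GFLOP/s)
    watts: float            # assumed power draw while busy


@dataclass(frozen=True)
class Workload:
    name: str
    gflop: float              # total work
    parallel_fraction: float  # 0.0 = fully serial, 1.0 = fully parallel


def runtime_s(w: Workload, d: Device) -> float:
    """Amdahl-style estimate: parallel and serial parts run at different rates."""
    parallel = w.gflop * w.parallel_fraction / d.parallel_gflops
    serial = w.gflop * (1.0 - w.parallel_fraction) / d.serial_gflops
    return parallel + serial


def energy_j(w: Workload, d: Device) -> float:
    """Energy = runtime x power, under the constant-power assumption."""
    return runtime_s(w, d) * d.watts


def assign(workloads, devices, objective="energy"):
    """Greedily place each workload on the device with the lowest cost."""
    if objective == "energy":
        cost = energy_j
    elif objective == "time":
        cost = runtime_s
    else:
        raise ValueError(f"unknown objective: {objective}")
    return {w.name: min(devices, key=lambda d: cost(w, d)).name for w in workloads}


# Hypothetical device profiles and workloads, for illustration only.
devices = [
    Device("cpu", parallel_gflops=100, serial_gflops=50, watts=100),
    Device("gpu", parallel_gflops=2000, serial_gflops=5, watts=300),
    Device("npu", parallel_gflops=800, serial_gflops=2, watts=50),
]
jobs = [Workload("matmul", 1000, 0.99), Workload("parse", 10, 0.1)]

print(assign(jobs, devices))                    # energy-optimal placement
print(assign(jobs, devices, objective="time"))  # latency-optimal placement
```

With these assumed numbers, the highly parallel `matmul` job lands on the low-power NPU when optimizing for energy and on the GPU when optimizing for time, while the mostly serial `parse` job stays on the CPU either way. A real orchestrator would also weigh data placement and current load.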

Equally critical is the role of memory. Modern AI systems are increasingly constrained not by compute but by data movement. High-bandwidth memory (HBM) and designs that place memory closer to compute are reducing latency and minimizing energy-intensive data transfers. In many cases, optimizing how data moves through a system delivers greater efficiency gains than increasing compute capacity itself.
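A standard way to reason about whether compute or data movement is the limit is the roofline model: attainable throughput is the lesser of peak compute and arithmetic intensity (FLOPs per byte moved) multiplied by memory bandwidth. The sketch below assumes a hypothetical accelerator with 100 TFLOP/s of fp16 compute and 2 TB/s of memory bandwidth (not any specific product) and uses idealized byte counts that ignore caching and tiling effects.

```python
PEAK = 100e12  # hypothetical peak compute: 100 TFLOP/s
BW = 2e12      # hypothetical memory bandwidth: 2 TB/s
BYTES = 2      # bytes per fp16 element


def arithmetic_intensity(flops, bytes_moved):
    """FLOPs performed per byte transferred to or from memory."""
    return flops / bytes_moved


def attainable_flops(ai, peak_flops, mem_bw):
    """Roofline model: performance is capped by compute or by memory bandwidth."""
    return min(peak_flops, ai * mem_bw)


def gemv(n):
    """Matrix-vector product: every matrix element is read once and used once."""
    flops = 2 * n * n
    bytes_moved = BYTES * (n * n + 2 * n)  # matrix + input vector + output vector
    return flops, bytes_moved


def gemm(n):
    """Matrix-matrix product, idealized: A, B, and C each touch memory once."""
    flops = 2 * n ** 3
    bytes_moved = BYTES * 3 * n * n
    return flops, bytes_moved


for name, (flops, nbytes) in [("gemv", gemv(4096)), ("gemm", gemm(4096))]:
    ai = arithmetic_intensity(flops, nbytes)
    perf = attainable_flops(ai, PEAK, BW)
    bound = "memory-bound" if perf < PEAK else "compute-bound"
    print(f"{name}: AI={ai:.1f} FLOP/byte, {perf / 1e12:.1f} TFLOP/s ({bound})")
```

Under these assumptions, the matrix-vector product performs about one FLOP per byte and reaches only about 2% of peak: doubling compute would not speed it up at all, while doubling memory bandwidth would roughly double it. The large matrix-matrix product sits far to the right of the ridge point (50 FLOP/byte here) and is compute-bound.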

Beyond silicon, data centers are undergoing a fundamental redesign. The traditional model of scaling horizontally with general-purpose servers is no longer sufficient for AI workloads. Instead, we are seeing the rise of high-density compute clusters purpose-built for training and inference.

This evolution introduces new challenges, particularly around power and thermal management. Cooling has become a first-order problem. Air-based systems are approaching their limits, giving way to liquid and immersion cooling, which enable significantly higher compute density while reducing energy overhead. These innovations are not just about performance; they are about making large-scale AI systems economically and operationally viable.

Yet hardware alone does not solve the efficiency problem. Software is an equally powerful lever. Techniques such as model quantization, pruning, and intelligent workload scheduling can substantially reduce computational demand with little or no loss in model quality. In many cases, efficiency gains come not from scaling infrastructure but from using it more intelligently.
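As an illustration of the first technique, the sketch below shows symmetric per-tensor int8 quantization, one common and simple scheme: every fp32 weight is mapped to an 8-bit integer through a single shared scale factor, cutting storage four-fold (4 bytes to 1 byte per weight). This is a minimal pure-Python sketch under that assumption; production toolchains typically add per-channel scales, calibration data, and quantized kernels, and the example weights are made up.

```python
def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: w is approximated by scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map int8 codes back to approximate floating-point weights."""
    return [scale * v for v in q]


# Hypothetical weights, for illustration only.
weights = [0.42, -1.27, 0.003, 0.9, -0.05]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(f"scale={scale:.4f}, max abs error={max_err:.4f}")
```

The per-weight reconstruction error is bounded by half the scale factor, which is why outlier weights, which inflate the scale for the whole tensor, are a central concern in practice.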

While much of the focus in AI infrastructure is placed on compute and hardware, an equally critical layer is operational intelligence. These are the systems that manage, allocate, and optimize resources in real time.

This is where traditional enterprise systems, particularly ERP and supply chain platforms, are undergoing a quiet but significant transformation. Historically designed for financial tracking and resource planning, these systems are evolving into real-time orchestration layers for increasingly complex infrastructure environments.

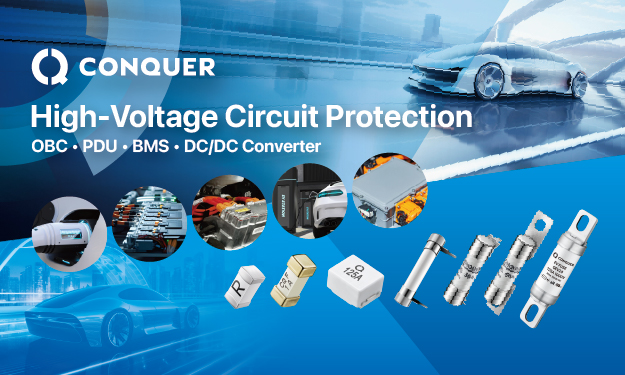

In the context of AI, this shift is particularly important. The global supply chain for semiconductors, GPUs, and specialized hardware remains highly constrained and sensitive to disruption. Events such as the COVID-19 pandemic and ongoing geopolitical shifts have exposed the fragility of single-source dependencies. As a result, multi-sourcing strategies, dynamic inventory buffers, and predictive supplier risk modeling are becoming essential.
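A back-of-the-envelope calculation shows why multi-sourcing helps and where it stops helping. The sketch below assumes each supplier has an independent probability of disruption over some planning period, plus an optional common shock (for example, a pandemic or a regional export restriction) that hits all suppliers at once. The probabilities are hypothetical, and real supplier risk models are far richer than this.

```python
from math import prod


def p_total_outage(supplier_probs, p_common=0.0):
    """Probability that no supplier can deliver.

    Assumes each supplier is disrupted independently with its own probability,
    plus an optional common shock (probability p_common) that disrupts all of them.
    """
    return p_common + (1.0 - p_common) * prod(supplier_probs)


# Hypothetical per-supplier disruption probabilities.
single = p_total_outage([0.10])
dual = p_total_outage([0.10, 0.10])
dual_with_shock = p_total_outage([0.10, 0.10], p_common=0.05)

print(f"single source: {single:.3f}")
print(f"two suppliers: {dual:.3f}")
print(f"two suppliers, 5% common shock: {dual_with_shock:.4f}")
```

Under the independence assumption, adding a second supplier cuts the outage probability from 10% to 1%. A common shock, however, puts a floor under the risk that adding more suppliers exposed to that same shock cannot remove, which is why diversification across regions matters as much as the number of suppliers.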

Modern ERP systems, when augmented with AI, are enabling real-time visibility into these supply chains. They can track component availability, forecast demand spikes, and optimize procurement decisions. This level of intelligence is critical to ensuring that infrastructure scaling is not limited by upstream constraints.

At the data center level, ERP-like orchestration is also extending into resource management. From tracking GPU utilization to optimizing energy consumption across clusters, these systems are increasingly acting as the control layer that determines how efficiently infrastructure operates.

In many ways, the future of AI infrastructure will depend not just on how powerful systems are, but on how intelligently they are managed. ERP and supply chain platforms, once seen as back-office tools, are becoming central to this transformation by bridging the gap between physical infrastructure and intelligent operations.

The broader semiconductor ecosystem is also adapting to this shift. We are moving away from a one-size-fits-all approach toward a layered ecosystem of specialized hardware tailored for distinct use cases, from hyperscale training clusters to edge inference devices. This specialization is essential as AI expands beyond centralized data centers into real-world environments.

Edge computing, in particular, will play a pivotal role in the next phase of AI adoption. By moving inference closer to the point of data generation, edge AI reduces latency, lowers bandwidth requirements, and distributes computational load more efficiently across networks. This not only improves performance but also alleviates pressure on centralized infrastructure.

However, as AI infrastructure scales, sustainability becomes impossible to ignore. The energy demands of modern AI systems are growing at an unprecedented rate, placing significant strain on global power resources. Hyperscale data centers are already among the largest consumers of electricity, and this trajectory is accelerating.

Addressing this challenge requires a shift in how we think about performance. The most meaningful metric is no longer raw compute, but performance per watt. This is driving innovation across the stack, from energy-aware chip design to renewable-powered data centers and intelligent workload orchestration that aligns compute demand with energy availability.
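One simple form of aligning compute demand with energy availability is shifting deferrable jobs, such as batch training or offline inference, into the hours when the grid is cleanest. The sketch below picks the start hour that minimizes summed grid carbon intensity over a job's runtime, subject to a deadline. The hourly forecast values, the 100 kW job size, and the helper names are hypothetical; a real scheduler would pull forecasts from a grid-data source and also weigh electricity price and available capacity.

```python
def best_start_hour(forecast, duration_h, deadline_h):
    """Start hour (0 = now) minimizing summed carbon intensity while finishing by deadline_h."""
    latest_start = min(deadline_h, len(forecast)) - duration_h
    if latest_start < 0:
        raise ValueError("job cannot finish before the deadline")
    # Slide a window of duration_h hours over every feasible start time.
    return min(range(latest_start + 1),
               key=lambda s: sum(forecast[s:s + duration_h]))


def emissions_kg(power_kw, forecast, start, duration_h):
    """Emissions for a constant-power job: kW x 1 h x gCO2/kWh per hour, converted to kg."""
    return power_kw * sum(forecast[start:start + duration_h]) / 1000.0


# Hypothetical hourly grid carbon-intensity forecast, in gCO2/kWh.
forecast = [420, 400, 380, 300, 220, 180, 190, 260, 350, 410]

start = best_start_hour(forecast, duration_h=3, deadline_h=10)
print(f"run now: {emissions_kg(100, forecast, 0, 3):.0f} kg CO2")
print(f"start at hour {start}: {emissions_kg(100, forecast, start, 3):.0f} kg CO2")
```

With this made-up forecast, waiting four hours roughly halves the job's emissions for the same energy use, while tightening the deadline to hour 6 forces an earlier, dirtier window.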

Sustainability is no longer a constraint to be managed. It is a design principle. Data centers are increasingly being built around renewable energy sources, while operators are adopting strategies that dynamically optimize energy usage in real time. The intersection of efficiency and sustainability will define the next generation of AI infrastructure.

Looking ahead, several emerging technologies have the potential to reshape this landscape. Advanced packaging techniques that enable chiplet-based designs are making system architectures more scalable and efficient. Photonic computing, while still nascent, offers a path toward dramatically lower energy consumption for certain workloads. Meanwhile, next-generation memory technologies will continue to reduce the cost of data movement, which remains one of the most significant contributors to inefficiency in AI systems.

Perhaps the most important shift, however, is architectural. The future of AI infrastructure will be neither purely centralized nor purely distributed. It will be hybrid. Intelligence will exist across a spectrum, from hyperscale data centers to edge devices, working in coordination to optimize both performance and energy usage.

Ultimately, the companies that succeed in the AI era will not be those that simply build the largest models, but those that build the most efficient systems. Scale without efficiency is unsustainable from an economic, operational, and environmental standpoint.

The real challenge of the AI era is not just to build more powerful systems, but to build systems that are fundamentally smarter in how they consume and manage resources.

In that sense, infrastructure is no longer a backend concern. It is the foundation upon which the future of artificial intelligence will be defined.